
Online & In-Person

Global South AI Safety Hackathon

AI safety research is concentrated in a handful of countries. This hackathon changes that. Build AI safety tools, evaluations, and policy research from Latin America, Africa, or Asia, compete within your region, and join a pipeline from hackathon to fellowship to placement. With support from Schmidt Sciences.

Overview

The Global South AI Safety Hackathon brings together researchers, engineers, and policy professionals across Latin America, Africa, and Asia to work on AI safety problems that matter most in their regions. Over one weekend, participants build tools, evaluations, and policy research addressing gaps the field has overlooked.

The hackathon is designed for and with the Global South. Participants compete within their region, not globally.

Why the Global South?

AI safety research is concentrated in a handful of countries. None of the top 100 institutions by AI publication index, whether universities or companies, is based in Africa or Latin America (Chan et al., 2021). Meanwhile, AI risks hit differently in these regions: jailbreaks succeed more often in low-resource languages, and models trained on non-local data exhibit biases that surface in healthcare and hiring deployments.

This hackathon is not about bringing AI safety to the Global South. It is about bringing the Global South into AI safety. Researchers in these regions bring contextual knowledge the field needs about regulatory landscapes, language gaps, and the institutional constraints that determine whether safety research actually works in practice.

Regional Tracks

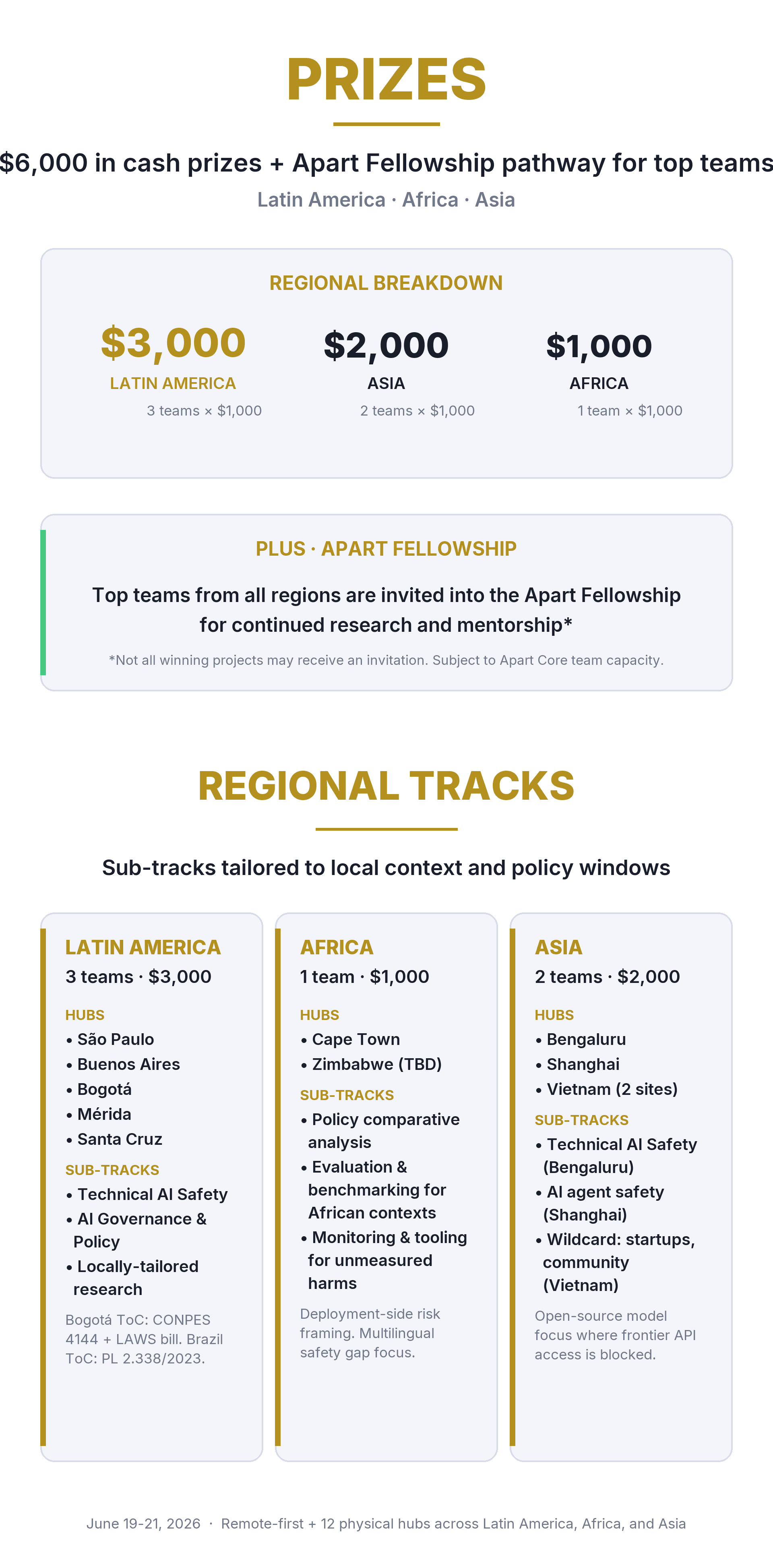

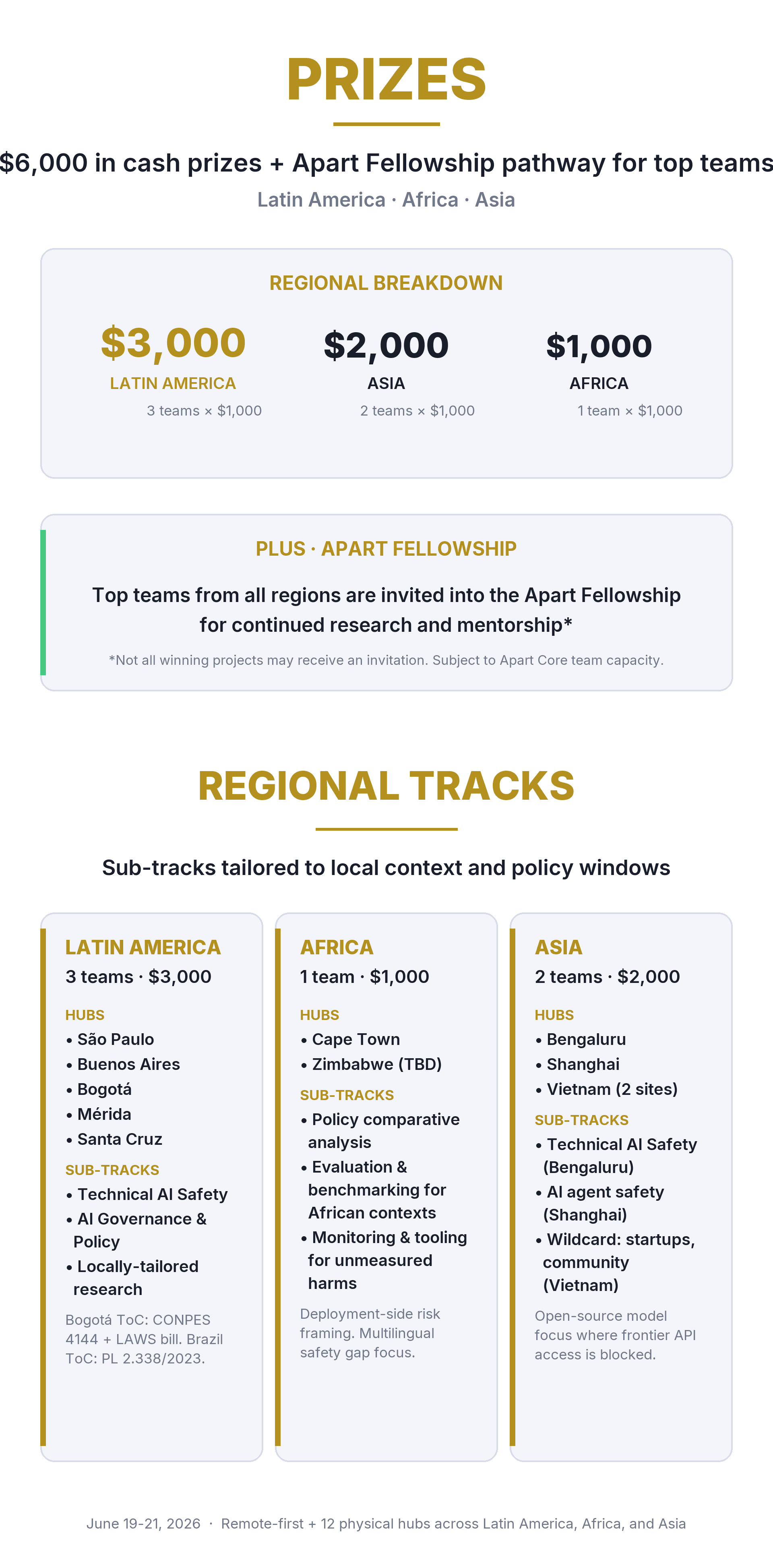

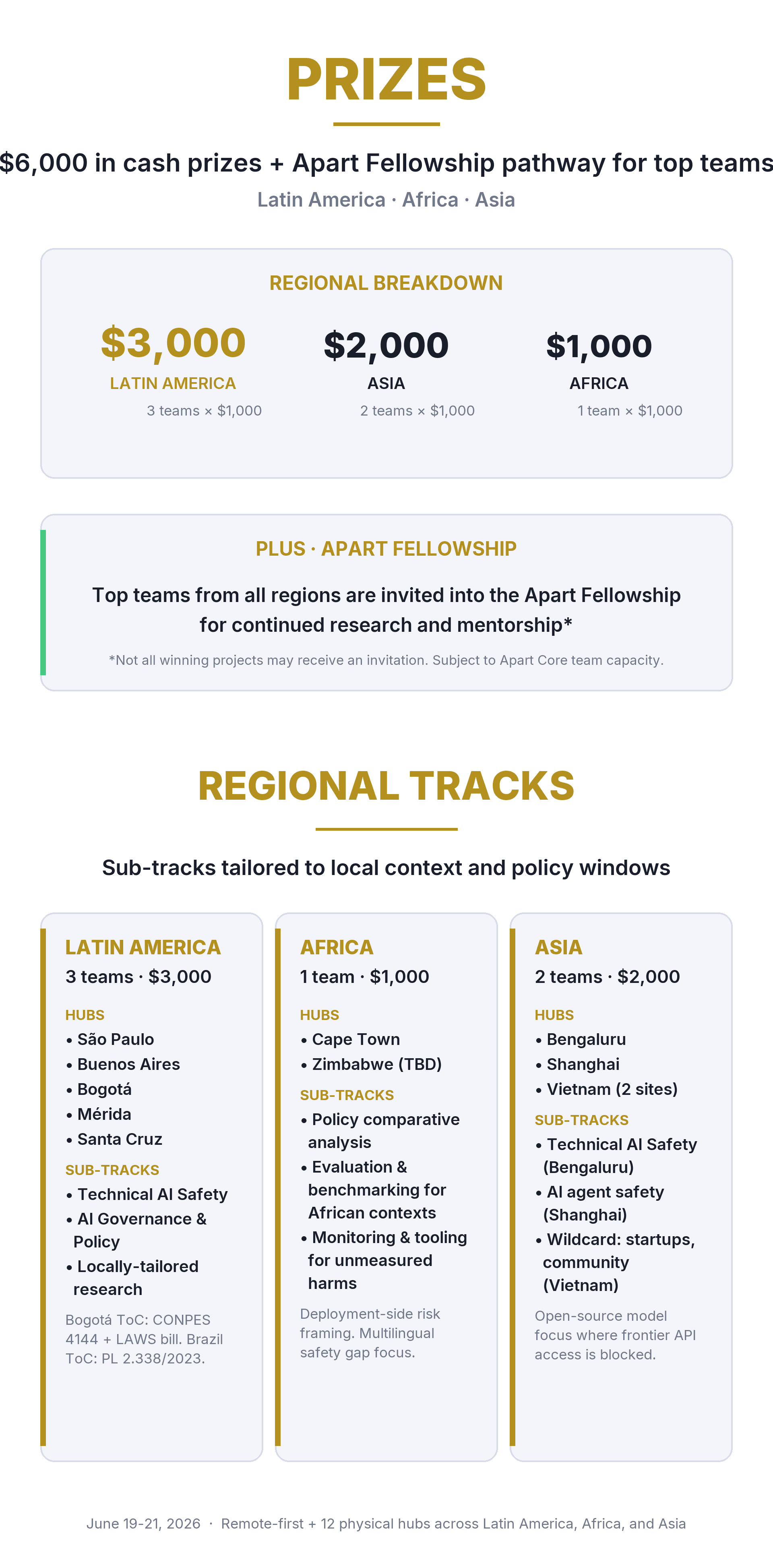

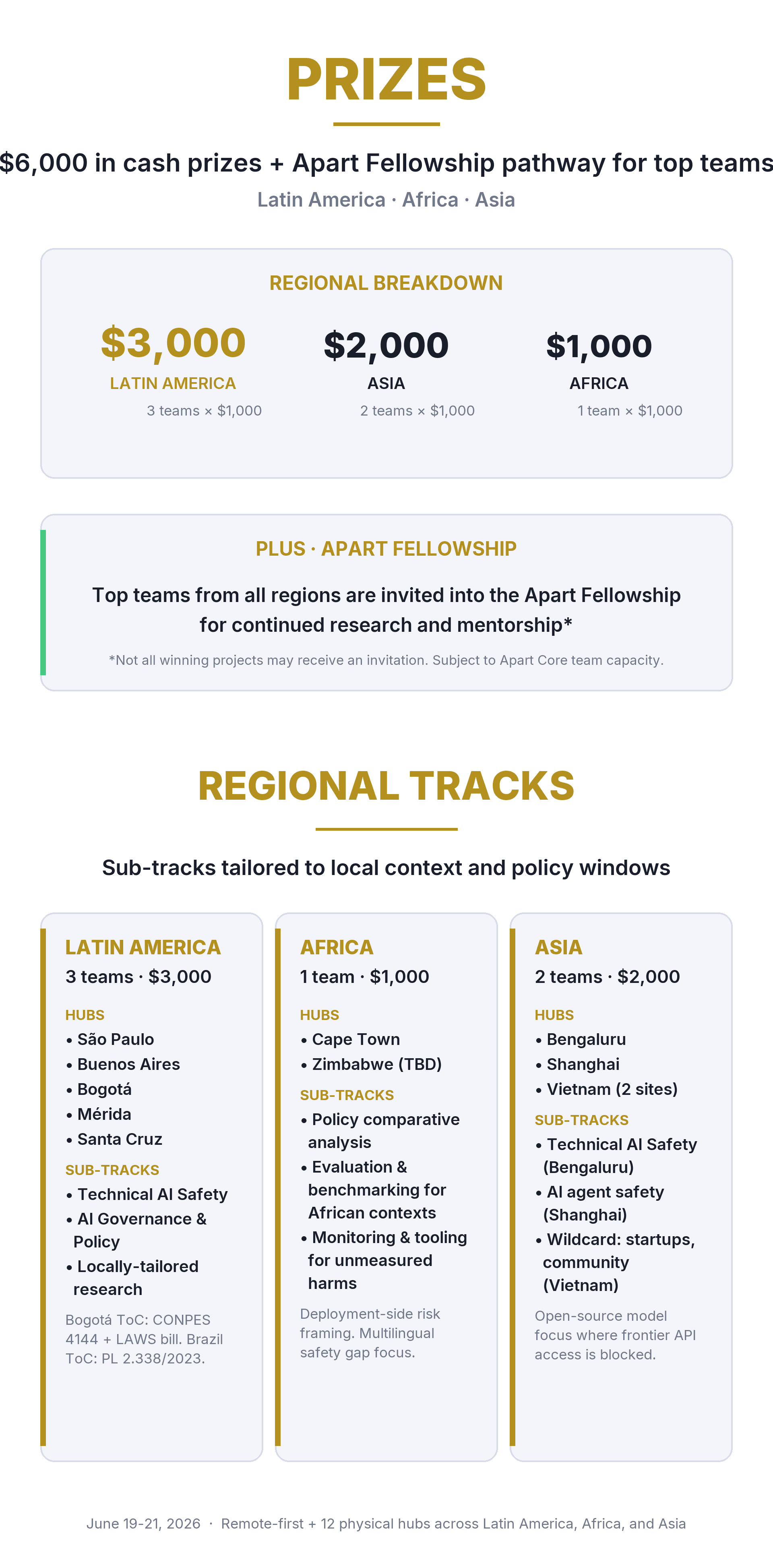

Participants compete within their region. Each region has its own winners and prizes. Within each regional track, participants choose a sub-track (Technical AI Safety, AI Governance/Policy, or a locally tailored sub-track defined by their hub).

Track: Latin America

Hubs in São Paulo, Buenos Aires, Bogotá, Mérida, and Guadalajara, with Santa Cruz (Bolivia) in conversation. Three winning teams ($3,000 total).

Brazil's AI bill (PL 2.338/2023), approved by the Senate and awaiting a vote in the Chamber of Deputies, includes a standalone human rights chapter that goes beyond the EU AI Act. Chile became the first country in the world to constitutionally protect neuro-rights. Colombia's CONPES 4144 established a national AI policy framework in early 2025. Projects in this track can address technical safety, governance, or locally tailored problems such as AI fairness for Portuguese and Spanish language models, regulatory analysis of emerging legislation, or safety evaluations for AI systems deployed in Latin American contexts.

Track: Africa

Hubs in Cape Town and Tanzania, with Zimbabwe in conversation. One winning team ($1,000 total).

The global AI safety movement has focused on constraining frontier development in the US and China, leaving the deployment-side risks that affect Africa largely unaddressed. The tractable agenda is managing deployment risks: misuse resilience, differential defence acceleration, and mitigating gradual disempowerment. African countries face a distinctive risk profile: deepfake-driven electoral interference, data-colonial dependency on foreign infrastructure, compute and semiconductor scarcity constraining sovereign AI capacity, and large-scale labour market disruptions. The benchmarks, evaluation frameworks, and policy instruments produced by leading safety institutes don't transfer to African languages, sectors, or social dynamics.

We invite submissions in three archetypes:

Policy: Comparative analysis of how African nations' draft AI policies address specific harms, with concrete implementation-grounded recommendations.

Evaluation and benchmarking: Build on existing test suites or prototype new evals that capture African-context risks.

Monitoring and tooling: Prototypes for surfacing harms not currently being captured, such as community-level monitoring.

Track: Asia

Hubs in Bengaluru, India (SAFL), Shanghai, and Vietnam (Hanoi and Ho Chi Minh City). Two winning teams ($2,000 total).

Asia spans the full spectrum of AI governance approaches. India's "Seven Sutras" framework explicitly prioritizes innovation over restraint. China has enacted more sector-specific AI regulations than any other country. Vietnam's AI law took effect in March 2026, the first binding AI legislation in Southeast Asia. The ASEAN Guide on AI Governance and Ethics offers a voluntary regional framework. Projects can address cross-border governance harmonization, safety evaluations for non-English language models, technical AI safety research, or region-specific risk assessments.

Who should participate?

AI safety researchers and engineers

Machine learning researchers and engineers

Policy researchers working on AI governance

Software engineers interested in safety infrastructure

Security researchers and red teamers

Students and early-career researchers exploring AI safety

Anyone working on AI and its impacts in the Global South

What you will do

Over three days, you will:

Form teams and choose a regional track and sub-track

Research and scope a specific problem using the provided resources

Build a project: a tool, evaluation, policy analysis, or research contribution

Submit a research report (PDF) documenting your approach, results, and implications

Have your work reviewed by judges from AI safety organizations, universities, and policy institutions in your region

What happens next

After the hackathon, all submitted projects are reviewed by expert judges. Top projects receive prizes. The best teams may be invited into the Apart Fellowship for continued research and mentorship (subject to the asterisked caveat in Overview).

Organized by

Apart Research

Local hubs (13):

Latin America: BAISH (Buenos Aires) | EA Brazil (São Paulo) | AI Safety Colombia (Bogotá) | AISMX (Mérida and Guadalajara) | Santa Cruz Hub (Bolivia, in conversation)

Africa: AI Safety South Africa (Cape Town) | CAIMSA (Tanzania) | AI Safety Zimbabwe (in conversation)

Asia: Electric Sheep (Bengaluru) | SAFL (India) | Open Community for AI Safety China (Shanghai) | AnToàn.AI (Hanoi and Ho Chi Minh City)

Supported by Schmidt Sciences.

Registered Local Sites

Besides remote and virtual participation, our amazing organizers also host local hackathon sites where you can meet up in person and connect with others in your area.

The in-person events for the Apart Sprints are run by passionate individuals just like you! We organize the schedule, speakers, and starter templates, and you can focus on engaging your local research, student, and engineering community.
